| Tool | Capability |

|---|---|

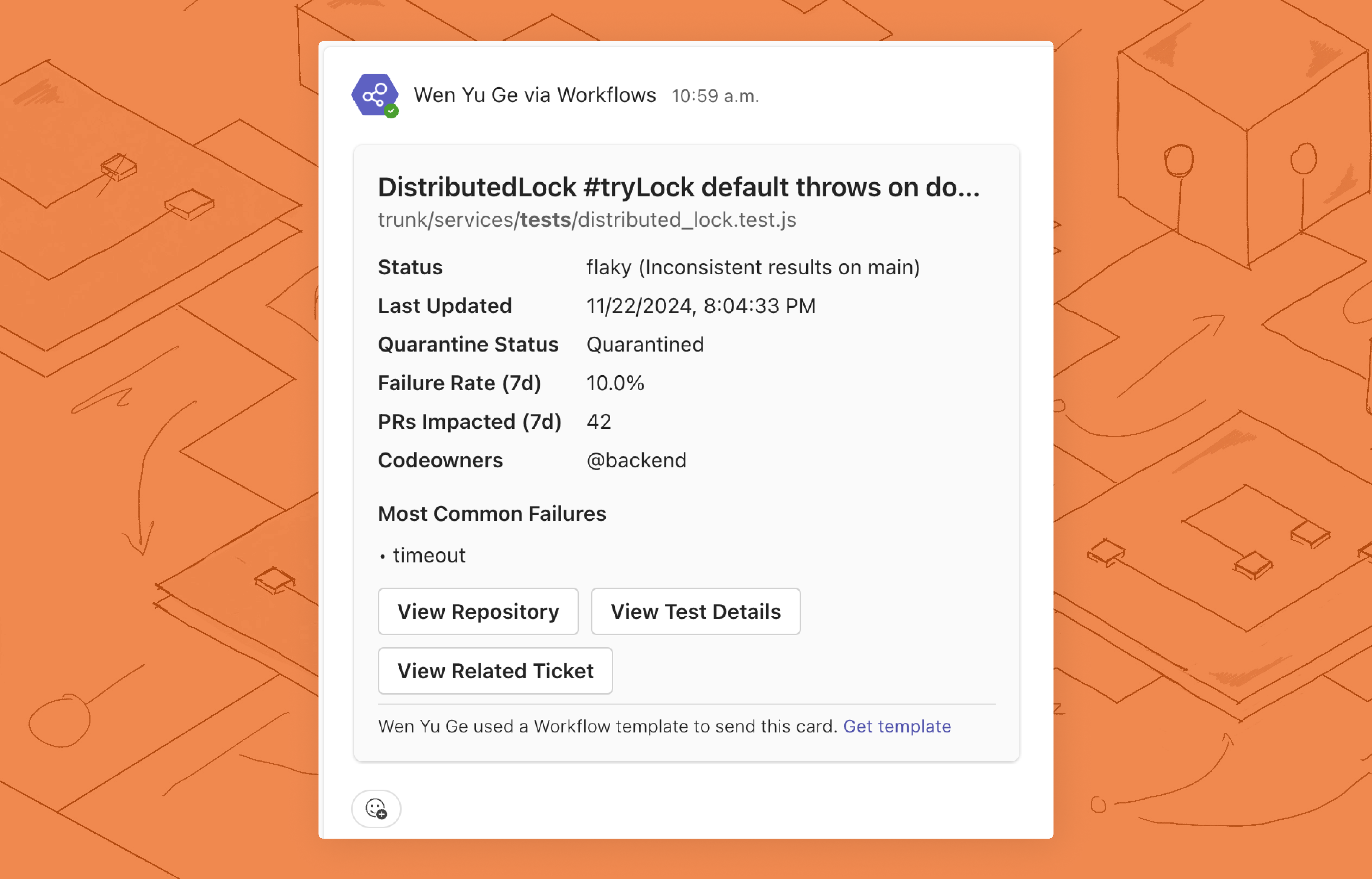

fix-flaky-test | Experimental: Retrieve insights around a failing/flaky test |

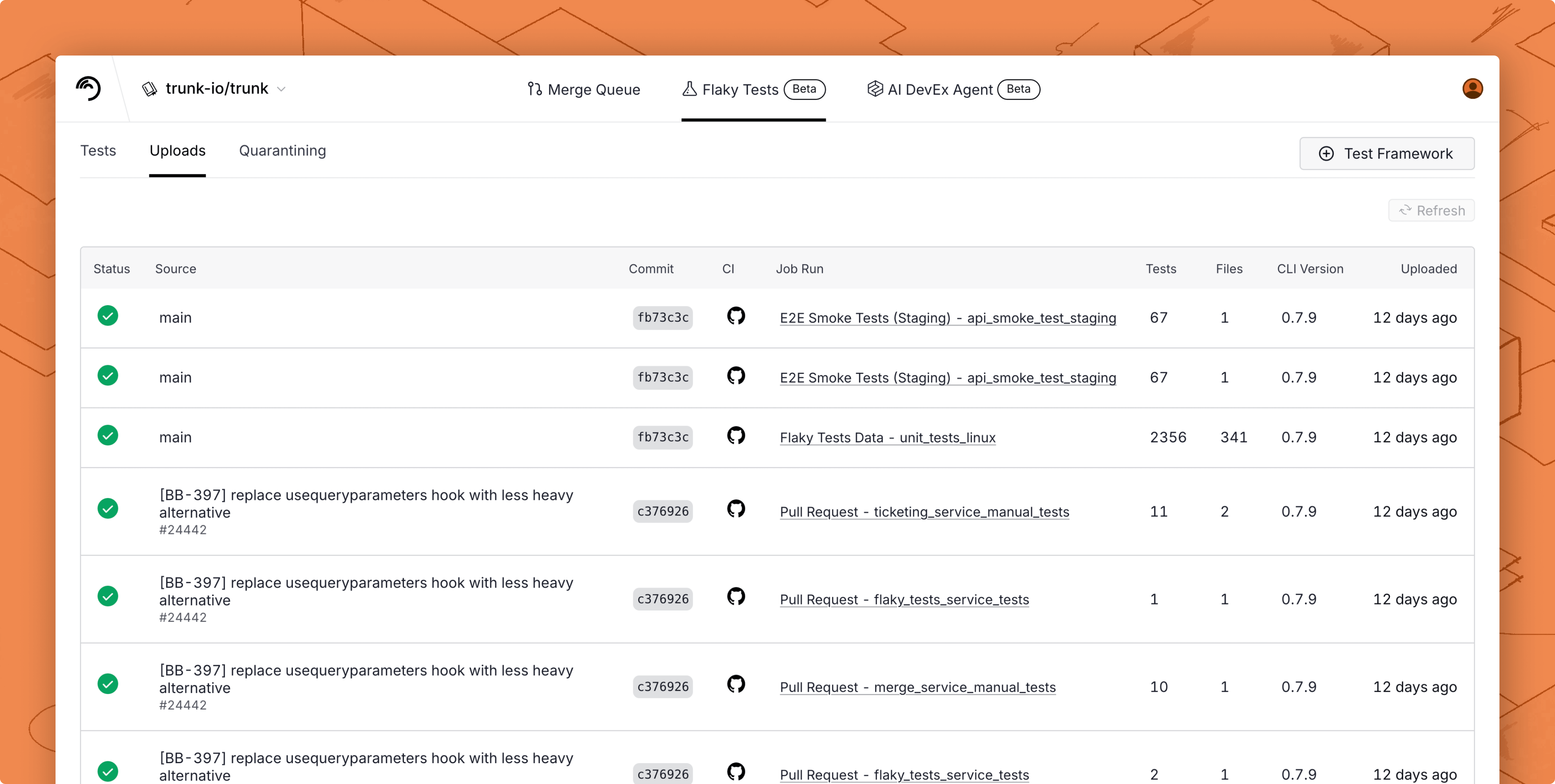

setup-trunk-uploads | Experimental: Create a setup plan to upload test results |

| Tool | Capability |
|---|---|
| fix-flaky-test | Experimental: Retrieve insights around a failing/flaky test |
| search-test | Experimental: Look up a test case ID by test name |
| investigate-ci-failure | Experimental: Investigate a failing CI run |
| setup-trunk-uploads | Experimental: Create a setup plan to upload test results |
