- Python

- OpenCV

- TensorFlow-GPU (depending on GPU availability)

- NVIDIA CUDA Toolkit (depending on GPU availability)

FER_Modified: https://www.kaggle.com/srinivasbece/fer-modified

- Syed Qausain Huda

- Swadha Kumar

- Saunak Das

- Aditya Raj Singh

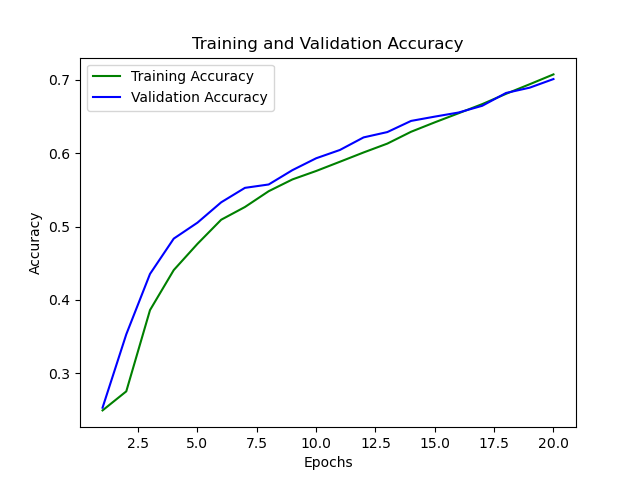

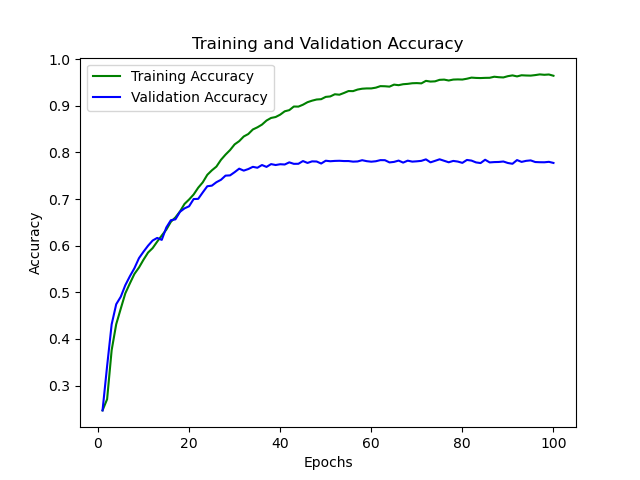

This is an implementation of a convolutional neural network (CNN) architecture for detecting emotions in human faces, supplied either as a static image or as video from a webcam. The model is trained before use and is then given images to classify. The prediction it returns determines which emotion the person is displaying. Our model has one input layer, four hidden layers, and two fully connected neural networks (FCNNs). With this architecture, we achieved around 97% testing accuracy and around 78% validation accuracy. We use TensorFlow and Keras functions and models throughout.
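As a rough illustration of how a returned prediction can be turned into an emotion, here is a minimal sketch in plain Python. It assumes the model outputs one score per class; the label list, its order, and the `decode_prediction` helper are hypothetical and not taken from this repository, so the real order must match the class indices used during training.

```python
# Hypothetical label order (an assumption): replace it with the class order
# that was actually used when the model was trained.
EMOTIONS = ["angry", "disgust", "fear", "happy", "neutral", "sad", "surprise"]


def decode_prediction(scores, labels=EMOTIONS):
    """Return the (label, score) pair with the highest score."""
    if len(scores) != len(labels):
        raise ValueError("expected one score per emotion label")
    # Index of the largest score, i.e. an argmax over the class scores.
    best = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best], scores[best]


# Example scores standing in for the model's output on one face image.
scores = [0.02, 0.01, 0.05, 0.80, 0.07, 0.03, 0.02]
label, confidence = decode_prediction(scores)
print(label, confidence)
```

With a Keras model, `scores` would typically be one row of the array returned by `model.predict`, converted to a list.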

- Our model was trained only on static images containing just faces. It could also be trained on videos.

- Our model currently does not handle side profiles well. We can either train it on side profiles as well, or use better libraries for the purpose.

- Our model does not work properly in poor lighting. We need to work on that.

- We can extend this model to other forms of media as well, such as audio, video, and text.
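For the poor-lighting item above, one common preprocessing step that might help is histogram equalization, which spreads a dark image's grey levels across the full range before the face is passed to the model. The sketch below is an assumption about a possible approach, not something this project currently does; `equalize_histogram` is a hypothetical helper that works on a flat list of 8-bit greyscale values.

```python
def equalize_histogram(pixels, levels=256):
    """Equalize a flat list of greyscale values in the range [0, levels - 1]."""
    if not pixels:
        return []

    # Count how often each grey level occurs.
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1

    # Cumulative distribution: number of pixels at or below each level.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)
    total = len(pixels)
    if total == cdf_min:
        # Every pixel has the same value, so there is nothing to stretch.
        return list(pixels)

    # Map each level so the occupied range is stretched to [0, levels - 1].
    scale = (levels - 1) / (total - cdf_min)
    return [round((cdf[p] - cdf_min) * scale) for p in pixels]


dark_patch = [10, 10, 20, 30]  # a tiny, under-exposed example
print(equalize_histogram(dark_patch))
```

Since the project already depends on OpenCV, `cv2.equalizeHist` applied to a greyscale frame is the ready-made counterpart. Whether it actually improves accuracy here would need to be checked against the validation set.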